Bending the Wrong Curve: the IPCC’s Ecocidal Strategy

By Frank Rotering | February 1, 2018

Image by NiklasPntk from Pixabay

Climate scientists have done an admirable job of researching the environmental problems resulting from excess greenhouse gases (GHGs), but they have failed to guide society towards rational solutions. Here I address the IPCC’s central role in this failure. My claim is that the distortions of climate science are rooted in the organization’s ecocidal strategy: to bend the emissions curve to zero rather than the concentrations curve to a safe level.

Understanding this strategy and how it came about requires some basic knowledge about the history of both the IPCC and the UNFCCC (UN Framework Convention on Climate Change). The latter is the agreement that underpins the international negotiations to address global warming. Let me do a quick run-through and then discuss some important details.

The IPCC was established by two UN organizations in 1988. In endorsing this step, the UN General Assembly urged the new organization to provide international coordination for assessing the magnitude and impacts of global warming, and to offer “realistic response strategies.” The IPCC delivered its first Assessment Report (AR1) in 1990. Based on the concerns expressed there, the UN in 1992 tried to spur concrete action through the UNFCCC. This agreement came into force in 1994 and now has wide support among nations. The IPCC published its second Assessment Report (AR2) in 1995 and followed this with AR3 in 2001, AR4 in 2007, and AR5 in 2014. The sixth report, which is now being prepared, is scheduled for publication in 2021.

The UNFCCC document is highly significant because it outlines a strategic approach that the IPCC would subsequently interpret and apply. Its key section is Article 2:

“The ultimate objective … is to achieve … stabilization of greenhouse gas concentrations in the atmosphere at a level that would prevent dangerous anthropogenic [human-caused] interference with the climate system. Such a level should be achieved within a time-frame sufficient to allow ecosystems to adapt naturally to climate change, to ensure that food production is not threatened and to enable economic development to proceed in a sustainable manner.”

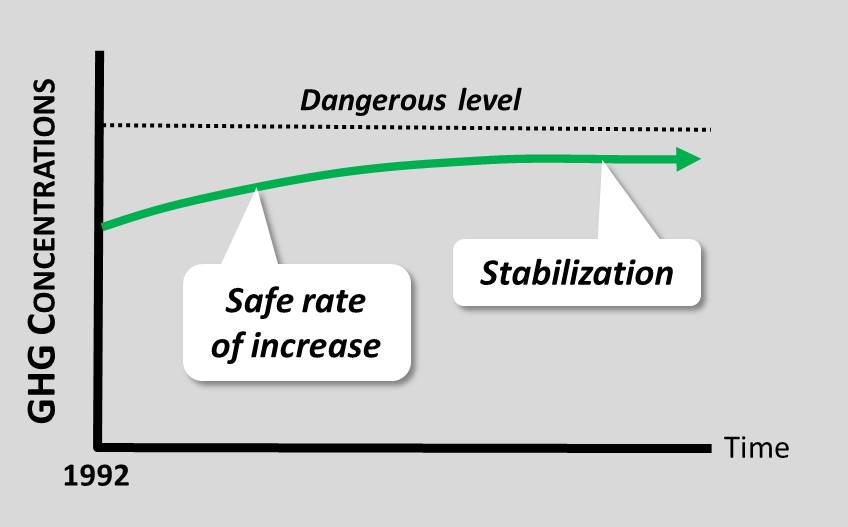

This objective explicitly states that GHG concentrations must reach a safe level, which is correct. However, it also does something else: it implicitly states that in 1992 the dangerous GHG level had not yet been reached. This is indicated by the phrase “would prevent” as well as the guideline for an acceptable rate of concentrations increase. The objective can therefore be graphically depicted as follows:

The question must be asked: Is this objective empirically and logically justified? That is, should UNFCCC Article 2 as a whole be treated as a sound basis for the IPCC’s solutions strategy? I will first answer this question from my perspective and then examine the IPCC’s interpretation as expressed in AR2.

My initial point is that the “stabilization” of GHG concentrations is irrelevant. The GHG level can go up, down, or remain constant so long as it stays within its safe range. The word seems to be used – then and now – to suggest an effective solution, but there is no logical basis for this.

Second, an acceptable rate of concentrations increase would be correct if this increase occurred entirely within the safe GHG range. If it occurs outside this range then ecosystem damage will result, no matter how gradual the increase may be.

The validity of the UNFCCC objective therefore hinges on the interpretation of “dangerous interference” as this relates to the GHG level. If the 1992 level was well below the danger point then a slow increase would pose few immediate risks, and the objective might make sense. If the 1992 level was known to be dangerous, then any increase would be perilous and the objective should be rejected.

The dictionary defines “danger” as “liability or exposure to harm or injury,” with the synonyms “risk,” “peril,” “hazard,” and “jeopardy.” It also states that “danger” refers to “… all kinds of injury or evil consequences, either near at hand and certain, or remote and doubtful.” It further informs us that “Dangerous applies to whatever has the power to cause harm or loss unless dealt with carefully and cautiously.” (Random House and Merriam-Webster)

The question thus becomes: did scientists in 1992 know that the existing GHG concentrations entailed this kind of harm or peril? Let’s examine the historical record and see.

In 1965 a group of prestigious scientists delivered a major report to US President Lyndon Johnson. Titled Restoring the Quality of our Environment, it noted that there had been a measurable increase in CO2 that could have “a significant effect on climate” which could be “deleterious” for humankind. In the same year a meeting headed by Roger Revelle, a climate science pioneer, concluded that the climate system is “precariously balanced.” Thus, not only was the danger from the rising GHG level clearly perceived by the mid-1960s, so was the climate system’s tendency to sudden shifts as tipping points are reached.

In 1988 the UN declaration that endorsed the IPCC’s creation stated that, “… continued growth in atmospheric concentrations of ‘greenhouse’ gases could produce global warming with an eventual rise in sea levels, the effects of which could be disastrous for mankind if timely steps are not taken at all levels …” Two years later the IPCC’s AR1 cited numerous climate threats, including the northward shift of climatic zones beyond the adaptive capacity of species, reduced water availability, increased natural hazards such as floods and droughts, sea-level rise, modified ocean circulation, and the melting of glaciers and permafrost.

Perhaps most significantly, 1992 was the year when 1,700 scientists, including 104 Nobel laureates, issued their World Scientists’ Warning to Humanity. This document alerted our species to the fact that, “Increasing levels of gases in the atmosphere from human activities, including carbon dioxide released from fossil fuel burning and from deforestation, may alter climate on a global scale. Predictions of global warming are still uncertain … but the potential risks are very great.”

For me the conclusion is inescapable: by 1992 scientists were fully aware that further increases in GHG concentrations would cause risky and thus dangerous interference with the climate system. This means that there was no justification for this aspect of the UNFCCC objective, which permitted precisely such increases. Let me now turn to the IPCC’s interpretation of this objective three years later.

The opening section of the second Assessment Report addresses Article 2 by first assigning the responsibility for determining the unsafe GHG level to policymakers – that is, by placing it outside the scientific domain. The report then tells us that the IPCC’s restricted role is, “… to provide a sound scientific basis that would enable policymakers to better interpret dangerous anthropogenic interference with the climate system.” This is followed by an initial outline of the IPCC’s strategy: to give policymakers the choice of several elevated but stabilized concentration levels, plus the net emissions pathways to achieve them. These pathways would then form the basis for climate policies such as clean energy.

The IPCC’s interpretation was badly misguided for two reasons. First, determining the unsafe GHG level is emphatically a scientific and not a policymaker responsibility. There is no social, economic, or political reasoning that could conceivably link a specific GHG level to the initiation of dangerous environmental effects. The scientific reasoning, on the other hand, is readily envisaged. Using CO2 numbers, it would go something like this:

- In 1965 the CO2 concentration was 320 ppm;

- In that year the danger from global warming had just become evident;

- The ocean’s thermal inertia delays atmospheric warming by at least 20 years;

- Twenty years earlier, in 1945, the CO2 concentration was 310 ppm;

- Thus, the maximum safe CO2 level is approximately 310 ppm.

The IPCC could have chosen a different year, considered GHG concentrations as a whole, and no doubt added much detail and depth. However, this kind of reasoning was never applied, which means that the organization evaded its scientific responsibilities.
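The lagged-year reasoning above can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not a scientific method: the two concentrations are the figures quoted in the list, while the function name `max_safe_co2` and the lookup table are hypothetical names introduced here.

```python
# CO2 concentrations (ppm) quoted in the list above; no other data is assumed.
CO2_PPM_BY_YEAR = {1945: 310, 1965: 320}


def max_safe_co2(danger_evident_year, lag_years, record):
    """Return (baseline_year, ppm) for the year `lag_years` before the danger
    became evident, treating that year's level as the maximum safe level."""
    baseline_year = danger_evident_year - lag_years
    if baseline_year not in record:
        raise KeyError(f"no concentration recorded for {baseline_year}")
    return baseline_year, record[baseline_year]


# Danger evident in 1965, ocean thermal inertia of at least 20 years.
year, level = max_safe_co2(1965, 20, CO2_PPM_BY_YEAR)
print(f"Approximate maximum safe CO2 level: {level} ppm (the {year} value)")
```

Choosing a different year or a different lag only changes the two arguments, provided a concentration for the resulting baseline year is added to the table.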

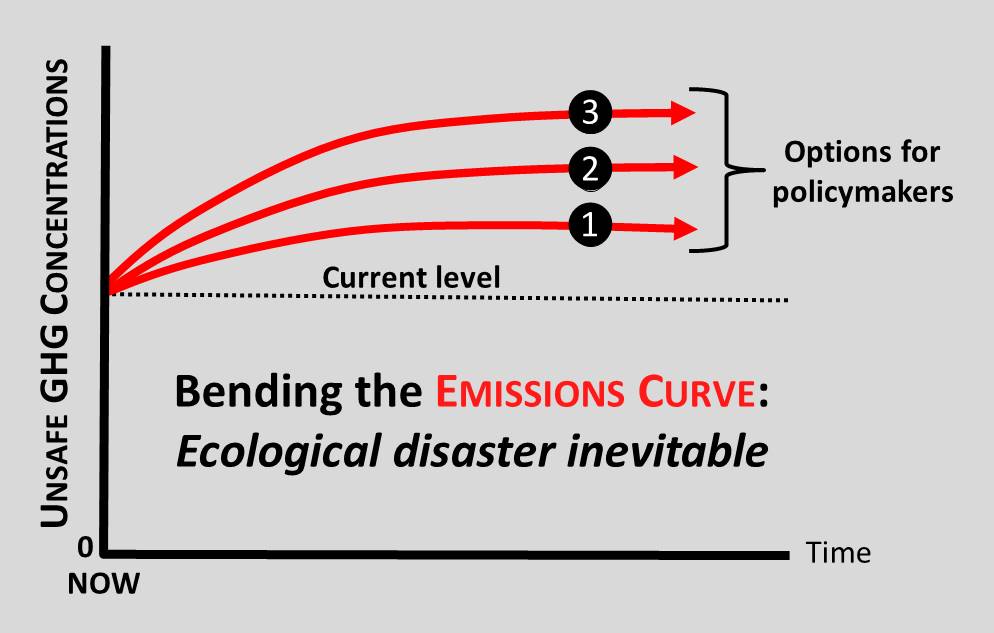

The IPCC’s interpretation was also questionable because it substituted relative success for an absolute solution. This is best explained with the help of another diagram:

Unlike the previous graph, this one has unsafe GHG concentrations on the vertical axis. The zero point is therefore the start of the GHG danger zone, and the current level is well inside it.

As emissions decline to zero at various rates (not shown), the concentrations stabilize at various levels, as depicted. The policymaker’s task is to choose a lower concentration level for the future by adopting an aggressive emissions reduction policy today. For example, clean energy innovations might shift the curve from #3 to #2, or from #2 to #1. Note, however, that these are only relative improvements. They fail to achieve the absolute goal of GHG safety, but are nevertheless treated as effective climate actions.
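A toy calculation may help make the point about relative improvements concrete. The sketch below assumes, purely for illustration, that emissions fall linearly to zero, that each year's emissions add directly to the unsafe concentration, and that nothing is removed from the atmosphere. The function name and all numbers are hypothetical and are in arbitrary units; this is not a carbon-cycle model.

```python
def stabilized_excess(initial_excess, initial_emissions, years_to_zero):
    """Toy model: return the unsafe concentration at which the curve levels
    off once emissions have declined linearly to zero (no removals)."""
    excess = initial_excess
    for year in range(years_to_zero):
        # Emissions shrink by an equal step each year until they reach zero.
        emissions = initial_emissions * (1 - year / years_to_zero)
        excess += emissions
    return excess


# Hypothetical scenarios: a faster decline gives a lower plateau, but every
# plateau stays above the starting excess, so none reaches the safe zone.
for label, years in [("#1", 20), ("#2", 40), ("#3", 60)]:
    plateau = stabilized_excess(100.0, 2.0, years)
    print(f"curve {label}: emissions reach zero in {years} years, "
          f"plateau at about {plateau:.0f} units of excess")
```

Under these assumptions, moving from curve #3 to curve #1 lowers the plateau, yet the excess never falls below its starting value, because bending emissions to zero only stops the increase.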

A crucial aspect of this scheme is that it can continue to be used as the GHG level rises. Because the GHG danger point was never specified and only relative improvements matter, deadly concentration options can be presented as rational choices far into the future. This is why graphs such as this appear in all of the IPCC’s Assessment Reports, despite the rapid increase in both concentrations and scientific understanding of the dangers involved. Presumably newer, even glossier versions of these curves are now being prepared for publication in 2021.

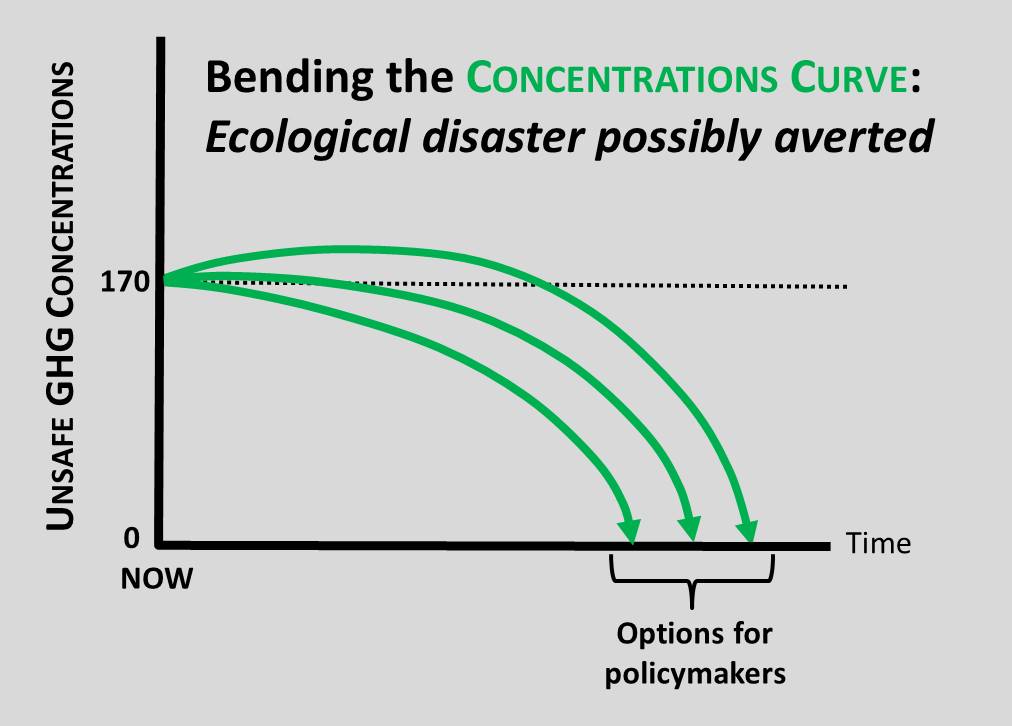

The tragic reality is that the IPCC strategy will inevitably result in ecological disaster because it bends the wrong curve: emissions instead of concentrations. The only approach that can possibly succeed is to scientifically establish the GHG danger point and then bend the concentration curve to safety through highly aggressive emission reductions and GHG removals. The prospective results are shown here:

This graph again differs from the previous one because real concentration levels are now being considered. In CO2-equivalent terms, the current GHG level is 490 ppm. In 1945, when the danger zone was likely entered, it was about 320 ppm. The difference of 170 ppm is shown on the graph. This is my crude but plausible approximation for the degree of GHG overshoot that now threatens the biosphere.

The graph also depicts the appropriate role for policymakers: choosing the optimal rate of concentrations decline. This is a difficult task because such declines will necessitate sharp reductions in both consumption and population levels. However, the task is completely impossible without the scientific specification of the GHG danger point. This is necessary for policymakers to establish the reality, gravity, and urgency of the crisis, allowing them to make well-informed decisions about the economic measures to be imposed and the social sacrifices that must be made.
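The overshoot arithmetic, and the kind of choice this leaves to policymakers, can be sketched as follows. The two concentration figures are the ones given above; the decline rates are hypothetical values chosen only to show how the rate determines the time needed to return to safety, and the function names are illustrative.

```python
import math

CURRENT_GHG_PPM = 490    # current CO2-equivalent level cited in the text
DANGER_POINT_PPM = 320   # approximate 1945 level cited in the text


def overshoot(current_ppm, danger_point_ppm):
    """Return how far the current level sits above the danger point (ppm)."""
    return max(0, current_ppm - danger_point_ppm)


def years_to_safety(overshoot_ppm, decline_ppm_per_year):
    """Return the whole years needed to eliminate the overshoot at a
    constant rate of concentration decline."""
    if decline_ppm_per_year <= 0:
        raise ValueError("the concentration must decline to reach safety")
    return math.ceil(overshoot_ppm / decline_ppm_per_year)


excess = overshoot(CURRENT_GHG_PPM, DANGER_POINT_PPM)
print(f"Overshoot: {excess} ppm")

# Hypothetical decline rates (ppm per year), not taken from the text.
for rate in (1, 2, 5):
    print(f"decline of {rate} ppm/year: {years_to_safety(excess, rate)} years")
```

The calculation only becomes possible once a danger point is specified, which is the reason the scientific specification has to come before the policy choice.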

To recap: In 1992 the UNFCCC implicitly stated that the GHG level could continue to rise, and in 1995 the IPCC explicitly stated that only policymakers could determine the GHG danger level. These two falsehoods became the foundation for the IPCC’s ecocidal strategy: to reduce emissions to zero rather than concentrations to their safe level.

My initial claim above was that the distortions of climate science are rooted in the IPCC strategy just discussed. Here are the key connections:

- The sloppy definitions of “global warming,” “climate change,” and “mitigation” create conceptual and semantic confusion. This makes it difficult for people to form a clear mental picture of the GHG crisis and its solutions, thereby shielding the strategy from critical scrutiny.

- The focus on emissions rather than concentrations supports the strategy’s core feature by restricting policy initiatives to emission reductions.

- The fixation on increased temperature instead of ecological damage allows the damage from the duration of elevated temperatures to be ignored. This undergirds the strategy’s pretense that stabilized concentrations, which extend such temperatures into the indefinite future, are meaningful solutions.

- The two-degree limit makes the story about stabilized concentrations credible. If the limit were either a realistic temperature or a realistic GHG level it would be obvious to all that the danger point was reached long ago. This would fatally undermine a strategy that assumes rising concentrations for decades to come. (Credibility is also the likely explanation for the switch from the UNFCCC’s concentration-based goal to today’s temperature-based goal: the concentration level keeps rising, but the two-degree limit has not yet been reached.)

- The marginalization of geoengineering achieves two ends. First, it eliminates GHG removals as a serious option, again restricting policy initiatives to emission reductions. Second, it allows solar radiation management (SRM) to be sidelined. Acknowledging the existential necessity of SRM, particularly in the Arctic, would reveal the gravity of the GHG crisis and weaken a strategy that relies crucially on the continued complacency of concerned minds.

Edits and Updates: Dec. 18/18